What are Pirate Metrics and why are they called "pirate"?

Pirate Metrics analyzes your business by tracking 5 key metrics, then helps you improve your product and increase your revenue. It’s the best analytics tool for your subscription business. AARRR!*

*Pirates always scream "AARRR!", which is also what the first letters of the five metrics below spell.

Acquisition - the user downloads the mobile application; we have their attention

Activation - the user performs some key activity that indicates a good first visit, i.e. the user enjoys the first visit and has a happy experience

Retention - the user continues to do that key activity, indicating they like your mobile app

Revenue - the user pays you

Reengagement - the user has left the product without activating, or never converted to a real user (made no deposit)

Currently we have two trackers:

- Adjust, because it helps us run campaigns on Facebook and Twitter

- Adobe Mobile Analytics, because it is part of Adobe SiteCatalyst

Here is the high-level architecture of the solution:

I collect data from Adjust and Adobe in Redshift. Using Pentaho Data Integration, I run a daily job that extracts data from the Adjust table, which is stored in OLAP. At the same time I run another job that takes two files from the Adobe FTP, one for iOS and another for Android. All data lands in the Redshift staging area, in separate tables. In addition, I load deposit, cost and revenue amounts from the DWH.

The main challenge is to combine both trackers, because their data differs in nature, in time zone and so on. I solved it for daily quantities using SQL. It is simple math. I chose Adjust as the reference tracker because it is more precise. We then calculate the sum of installs per day for every channel and operating system in Adjust. From the Organic channel in Adjust we subtract the number of Adobe installs, excluding Adobe organic installs. As a result we get installs by channel.
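The arithmetic can be sketched in a few lines of Python. This is only an illustration of the idea, not the SQL I actually run: the row layout, the channel name "Organic" and the sample numbers are assumptions made for the example. I also assume that the Adobe installs taken out of Adjust's Organic bucket are reported under their own Adobe channel.

```python
from collections import defaultdict

ORGANIC = "Organic"  # assumed channel label, for illustration only


def combine_installs(adjust_rows, adobe_rows):
    """Return {(day, os, channel): installs} with Adjust as the reference tracker.

    Each input row is a (day, os, channel, installs) tuple.
    """
    combined = defaultdict(int)

    # Start from Adjust: sum installs per day, operating system and channel.
    for day, os_name, channel, installs in adjust_rows:
        combined[(day, os_name, channel)] += installs

    # Adobe installs outside its organic channel are reported under their own
    # channel and subtracted from Adjust's Organic bucket for that day and OS.
    for day, os_name, channel, installs in adobe_rows:
        if channel == ORGANIC:
            continue
        combined[(day, os_name, channel)] += installs
        combined[(day, os_name, ORGANIC)] -= installs

    return dict(combined)


# Hypothetical sample rows, not real data.
adjust = [
    ("2015-11-10", "ios", "Facebook", 40),
    ("2015-11-10", "ios", "Organic", 100),
]
adobe = [
    ("2015-11-10", "ios", "Email", 30),
    ("2015-11-10", "ios", "Organic", 65),
]
result = combine_installs(adjust, adobe)
```

With this approach the daily total always stays equal to the Adjust total; only the split between Organic and the other channels changes.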

ETL process

I created two jobs: one for loading stg.stg_adobe and another for loading stg.stg_adjust.

Loading stg_adjust

Run Pentaho DI

Pentaho home path: /home/dasboard/Downloads/data-integration

To start Pentaho, open a terminal and run spoon.sh

Transformation 1: stg_adjust

This transformation extracts data from mob.adjust for the last 7 days and sends it to s3://s3/webanalys/adjust_data in gzip format.

Transformation 2: sql_adjust

This transformation runs the following SQL queries:

--copy data from the file into stg.stg_temp_adjust

copy stg.stg_temp_adjust from 's3://webanalys/adjust_data'

credentials

'aws_access_key_id=<key>;aws_secret_access_key=<key>'

delimiter '|'

timeformat 'YYYY-MM-DD HH24:MI:SS'

gzip;

--delete the overlapping period from stg.stg_adjust so that fresh data can be written

DELETE FROM stg.stg_adjust where stamp>= (SELECT MIN(stamp) FROM stg.stg_temp_adjust);

INSERT INTO stg.stg_adjust (SELECT * FROM stg.stg_temp_adjust);

--optimize the stg_adjust table

analyze stg.stg_adjust;

vacuum stg.stg_adjust;

--delete all data from the temp table

DELETE from stg.stg_temp_adjust;

vacuum stg.stg_temp_adjust;
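To make the refresh logic of these queries easier to follow, here is a small Python sketch of the same DELETE + INSERT pattern on in-memory rows. It is only a model of the idea: the function name, the dict layout and the sample values are hypothetical, and the real work is done by Redshift.

```python
def refresh_staging(target_rows, temp_rows):
    """Mimic the DELETE + INSERT refresh; rows are dicts with a 'stamp' key."""
    if not temp_rows:
        # MIN(stamp) over an empty temp table is NULL, so the DELETE matches nothing.
        return list(target_rows)

    cutoff = min(row["stamp"] for row in temp_rows)
    # Keep only target rows older than the earliest freshly loaded stamp ...
    kept = [row for row in target_rows if row["stamp"] < cutoff]
    # ... then append every row from the temp table.
    return kept + list(temp_rows)


# Hypothetical rows; ISO-style strings compare in chronological order.
target = [
    {"stamp": "2015-11-01 00:00:00", "installs": 5},
    {"stamp": "2015-11-08 00:00:00", "installs": 7},
]
temp = [
    {"stamp": "2015-11-08 00:00:00", "installs": 9},
    {"stamp": "2015-11-09 00:00:00", "installs": 4},
]
refreshed = refresh_staging(target, temp)
```

Because the extract always covers the last 7 days, rows inside that window are replaced with their latest values while older history stays untouched.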

Job: jb_adjusttoAWS

Kitchen job

Pentaho allows us to schedule jobs via Kitchen. I run my job using crontab. Since Kitchen itself is started from a shell script (kitchen.sh), I put my job in a shell script:

#!/bin/bash

/home/dasboard/Downloads/data-integration/kitchen.sh -file=/home/dasboard/Downloads/pentaho_di_files/jb_adjusttoAWS.kjb -level=Minimal

Loading stg_adobe

At the same time, we should load data from Adobe Mobile into Redshift, in a separate table.

By default, Adobe lets us use the "Data Warehouse" option to send data to the Adobe FTP.

Using Pentaho Data Integration, I connect to the FTP, grab the file and put it in a local folder, then unzip it, transform it and send it to S3 so that it can be loaded incrementally into the Adobe staging table. Here is the job:

Let's look at the transformation that transforms the CSV file and sends it to S3. The main problem with Adobe Data Warehouse is its date format, such as "10 November, 2015", which is not convenient for a database. That's why I quickly fix it with Pentaho:
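For readers without Pentaho at hand, the same date fix can be sketched in Python. This is not the Pentaho step itself, only an equivalent illustration. The function name is hypothetical, and the slash-separated output is my assumption, chosen to match the timeformat used in the COPY command.

```python
from datetime import datetime


def adobe_date_to_db(raw):
    """Convert an Adobe-style date such as '10 November, 2015' to 'YYYY/MM/DD'."""
    # %B parses the full month name; this relies on an English (or C) locale.
    parsed = datetime.strptime(raw.strip(), "%d %B, %Y")
    return parsed.strftime("%Y/%m/%d")


converted = adobe_date_to_db("10 November, 2015")
```

A value that does not match the expected pattern raises a ValueError, which makes malformed rows easy to spot before they reach the staging table.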

Finally, I run the COPY command in Redshift:

copy stg.stg_temp_adobe from 's3://webanalys/ios7days'

credentials 'aws_access_key_id=<key>;aws_secret_access_key=<key>'

delimiter '|'

timeformat 'YYYY/MM/DD HH24:MI:SS'

gzip;

DELETE FROM stg.stg_adobe where app_os='ios' and visit_date>= (SELECT MIN(visit_date) FROM stg.stg_temp_adobe WHERE app_os='ios');

INSERT INTO stg.stg_adobe (SELECT * FROM stg.stg_temp_adobe WHERE app_os='ios');

analyze stg.stg_adobe;

vacuum stg.stg_adobe;

DELETE from stg.stg_temp_adobe where app_os='ios';

vacuum stg.stg_temp_adobe;

This example is for iOS; the same applies to Android.

Once all the data is collected in staging, we can build a data mart to combine data from both trackers and enrich it with sales data.

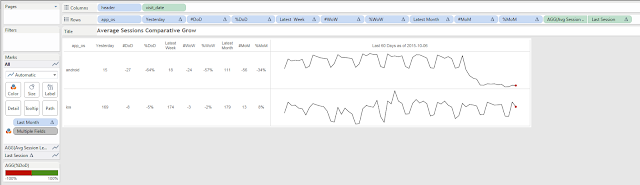

Let's look at the pirate metrics, which I visualized with Tableau.
